The surge in AI adoption, coupled with low AI literacy and weak governance, is creating a complex risk environment, with many organisations deploying AI without proper consideration of what is needed to ensure transparency, accountability and ethical oversight.

KPMG has partnered with the University of Melbourne to produce the most comprehensive global study into the public’s trust, use and attitudes towards AI.

This year, the survey has been expanded to more than 48,000 people across 47 countries, providing deeper insight into perceptions of AI across the globe.

What is Australia’s attitude towards AI?

AI use is producing benefits at work, but also risks

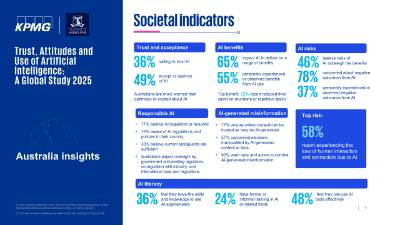

Two thirds (65%) of Australians report their employer uses AI, and 49% of employees say they are intentionally using AI on a regular basis.

Employees are reporting increased efficiency, effectiveness, access to information and innovation.

Almost half of employees (48%) admit to using AI in ways that contravene company policies. Many rely on AI output without evaluating its accuracy (57%) and are making mistakes in their work because of AI (59%). Many employees also admit to hiding their use of AI at work and presenting AI-generated work as their own.

Only 30% of employees say their organisation has a policy on generative AI use.

Calls for greater governance

The research found strong public support for AI regulation, with 77% of Australians agreeing regulation is necessary.

Only 30% believe current laws, regulation and safeguards are adequate to make AI use safe.

Australians expect international laws and regulation (76%), as well as oversight by the government and existing regulators (80%).

83% of Australians say they would be more willing to trust AI systems when assurances are in place, such as adherence to international AI standards, responsible AI governance practices, and monitoring system accuracy.

Australians are less trusting of and less positive about AI than people in most other countries

As well as being wary of AI, Australia ranks among the lowest globally on acceptance, excitement and optimism about it, alongside New Zealand and the Netherlands.

Only 30% of Australians believe the benefits of AI outweigh the risks, the lowest ranking of any country.

Australians trail behind other countries in realising the benefits of AI (55% vs 73% globally report experiencing benefits).

"The public’s trust of AI technologies and their safe and secure use is central to acceptance and adoption. Yet our research reveals that 78% of Australians are concerned about a range of negative outcomes from the use of AI systems."

Professor Nicole Gillespie, Chair of Trust at Melbourne Business School at the University of Melbourne

Australia is lagging in AI literacy

Australians have amongst the lowest levels of AI training and education, with just 24% having undertaken AI-related training or education compared to 39% globally.

Over 60% report low knowledge of AI (48% globally), and under half (48%) believe they have the skills to use AI tools effectively (60% globally).

Australians also rank lowest globally in their interest in learning more about AI.

"An important foundation to building trust and unlocking the benefits of AI is developing literacy through accessible training, workplace support, and public education."

Professor Nicole Gillespie

Understanding AI: The big picture

The KPMG and University of Melbourne Trust in AI Survey Report 2025 paints a complex picture of how people feel about AI.

While AI use is high, trust and literacy levels vary considerably across countries, with emerging economies leading in both areas. Concerns about AI risks are prevalent, highlighting the need for effective regulation and governance.

Overall, there is a clear ambivalence towards AI. People appreciate its technical capabilities yet remain cautious about its safety and societal impact. This complex mix of feelings has led to moderate to low acceptance of AI and an increase in worry over time.

Given the huge potential of AI technologies, careful management and an understanding of public expectations around regulation and governance will be pivotal in guiding their responsible development and use.

About the research

The team behind the research

Professor Nicole Gillespie, Dr Steve Lockey, Alexandria Macdade, Tabi Ward, and Gerard Hassed.

The University of Melbourne research team led the design, conduct, data collection, analysis, and reporting of this research.

Acknowledgments

Advisory group: James Mabbott, Jessica Wyndham, Nicola Stone, Sam Gloede, Dan Konigsburg, Sam Burns, Kathryn Wright, Melany Eli, Rita Fentener van Vlissingen, David Rowlands, Laurent Gobbi, Rene Vader, Adrian Clamp, Jane Lawrie, Jessica Seddon, Ed O’Brien, Kristin Silva, and Richard Boele.

We are grateful for the insightful expert input and feedback provided at various stages of the research by Ali Akbari, Nick Davis, Shazia Sadiq, Ed Santow, Tapani Rinta-Kahila, Alice Rickert, Lucy Kenyon-Jones, Morteza Namvar, Olya Ohrimenko, Saeed Akhlaghpour, Chris Ziguras, Sam Forsyth, Geoff Dober, Giles Hirst, and Madhava Jay.

We appreciate the data analysis support provided by Jake Morrill.

Report production: Kathryn Wright, Melany Eli, Bethany Fracassi, Nancy Stewart, Yong Dithavong, Marty Scerri and Lachlan Hardisty.

Citation

Gillespie, N., Lockey, S., Ward, T., Macdade, A., & Hassed, G. (2025). Trust, Attitudes and Use of Artificial Intelligence: A Global Study 2025. The University of Melbourne and KPMG.

Funding

This research was supported by the Chair in Trust research partnership between the University of Melbourne and KPMG Australia, and funding from KPMG International, KPMG Australia, and the University of Melbourne.

The research was conducted independently by the university research team.

Trusted AI: How KPMG can help

Keeping up with the rapid pace of AI evolution can be daunting, but KPMG’s AI Consulting Services and Trusted AI Framework can empower organisations to stay competitive and responsibly embrace AI transformation.

We blend our deep risk management skills with the latest digital solutions for ethical and responsible AI implementation. This strategic support helps organisations grow, innovate, and address complex challenges while prioritising human-centric values.

Our commitment to ethical AI fosters operational efficiency and strategic growth, always ensuring alignment with core business values of transparency, innovation, and ethical governance.

FAQs

What is trust in artificial intelligence?

Trust in artificial intelligence (AI) refers to the willingness to rely on an AI system based on positive expectations of its performance and ethical behaviour. This includes the system’s technical ability to provide accurate and reliable outputs, as well as its safety, security, and ethical use. Trust is crucial because it underpins the acceptance and sustained adoption of AI systems.

What is people’s perception of AI taking over the workforce?

People have mixed perceptions about AI taking over the workforce. While many recognise the efficiency and innovation AI can bring, there are significant concerns about job loss, deskilling, and dependency on AI.

Almost half of the respondents believe AI will eliminate more jobs than it will create, and many are worried about being left behind if they don’t use AI at work. However, there is also a recognition of the potential benefits, such as improved efficiency and decision-making.

Have people’s attitudes towards AI changed over time?

Yes, people’s attitudes towards AI have changed over time. Trust in AI has declined since the release of generative AI, with increased concerns about its risks and impacts. However, many people still recognise its benefits, such as increased efficiency and innovation.

What are the benefits and challenges of AI in the Australian workplace?

Fifty percent of respondents report increased efficiency, quality of work, and innovation due to AI. However, there are also concerns about increased time spent on repetitive tasks, compliance risks, and job security.

How do Australians feel about AI regulation?

A majority (70%) of Australians surveyed believe AI regulation is necessary, and only 30% believe the current regulations and laws governing AI are sufficient to make AI use safe and protect people from harm.

Additionally, Australians are highly concerned about AI-generated misinformation. About 87% of respondents want laws and actions to combat AI-generated misinformation. About 90% of respondents globally, including in Australia, agree there should be international laws and regulations.

How was the research conducted?

The research was conducted using an online survey completed by representative research panels in each country between November 2024 and mid-January 2025. The study surveyed 48,340 people across 47 countries, covering all global geographical regions.